Dear friends,

A student once asked me, “Can an AI ever love?”

Since the early days of AI, people have wondered whether AI can ever be conscious or feel emotions. Even though an artificial general intelligence may be centuries away, these are important questions.

But I consider them philosophical questions rather than scientific questions. That’s because love, consciousness, and feeling are not observable. Whether an AI can diagnose X-ray images at 95 percent accuracy is a scientific question; whether a chatbot can convince (or “fool”) an observer into thinking that it has feelings is a scientific question.

But whether it can feel is a question best left to philosophers and their debates. Or to the Tin Man, the robot character in The Wizard of Oz who longs for a heart only to learn that he had one all along.

Even if we can’t be sure that an AI will ever love you, I hope you love AI, and also that you have a happy Valentine’s Day!

Love,

Andrew

News

Phantom Menace

Some self-driving cars can’t tell the difference between a person in the roadway and an image projected on the street.

What’s new: A team led by researchers at Israel’s Ben-Gurion University of the Negev used projectors to trick semiautonomous vehicles into detecting people, road signs, and lane markings that didn’t exist.

How it works: The researchers projected images of a body (Elon Musk’s, to be precise) on a street, a speed-limit sign on a tree, and fake lane markings on a road. A Tesla on Autopilot and a Renault equipped with Intel Mobileye’s assistive driving system, both of which rely on sensors like cameras and radars rather than three-dimensional lidar, responded by swerving, stopping, or slowing. The paper proposes three convolutional neural networks to determine whether an object is real or illusory.

- One CNN checks whether the object’s surface texture is realistic, flagging, say, a stop sign projected on bricks.

- Another checks the object’s brightness to assess whether it reflects ambient, rather than projected, light.

- The third evaluates whether the object makes sense in context. A stop sign projected on a freeway overpass, for instance, would not.

- The team validated each model independently, then combined them. The ensemble caught 97.6 percent of phantom objects but mislabeled 2 percent of real objects as phantoms.
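To make the ensemble idea concrete, here is a minimal sketch in Python. The summary above does not say how the three models' outputs are combined, so the averaging rule, the 0.5 threshold, and the function names are assumptions for illustration, not the authors' method. Each input is a hypothetical per-model probability that the object is a phantom.

```python
from statistics import mean


def phantom_score(texture: float, light: float, context: float) -> float:
    """Average three per-model phantom probabilities (assumed combination rule)."""
    return mean([texture, light, context])


def is_phantom(texture: float, light: float, context: float,
               threshold: float = 0.5) -> bool:
    """Flag the object as a phantom if the averaged score reaches the threshold."""
    return phantom_score(texture, light, context) >= threshold


def rates(scored, threshold: float = 0.5):
    """Return (share of phantoms caught, share of real objects mislabeled).

    scored: list of ((texture, light, context), is_really_phantom) pairs.
    Assumes the list holds at least one phantom and one real object.
    """
    phantoms = [s for s, truth in scored if truth]
    reals = [s for s, truth in scored if not truth]
    caught = sum(is_phantom(*s, threshold=threshold) for s in phantoms) / len(phantoms)
    false_alarms = sum(is_phantom(*s, threshold=threshold) for s in reals) / len(reals)
    return caught, false_alarms
```

The two numbers returned by `rates` correspond to the kind of figures quoted above: the share of phantoms caught and the share of real objects wrongly flagged.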

Behind the news: A variety of adversarial attacks have flummoxed self-driving cars. A 2018 study fooled them using specially designed stickers and posters. Another team achieved similar results using optical illusions.

Why it matters: A mischief maker with an image projector could turn automotive features designed for safety into weapons of mass collision.

The companies respond: Both manufacturers dismissed the study, telling the authors:

- “There was no exploit, no vulnerability, no flaw, and nothing of interest: the road sign recognition system saw an image of a street sign, and this is good enough.” — Mobileye

- “We cannot provide any comment on the sort of behavior you would experience after doing manual modifications to the internal configuration [by enabling an experimental stop sign recognition feature].” — Tesla

We’re thinking: The notion that someone might cause real-world damage with a projector may seem far-fetched, but the possibility is too grave to ignore. Makers of self-driving systems should take it seriously.

That Swipe-Right Look

In an online dating profile, the photo that highlights your physical beauty may not be the one that makes you look smart or honest — also important traits in a significant other. A new neural network helps pick the most appealing shots.

What’s new: Agastya Kalra and Ben Peterson run a business called Photofeeler that helps customers choose portraits for dating and other purposes. Their model Photofeeler-D3 rates perceived looks, intelligence, and trustworthiness in photos. You can watch a video demo here.

Key insight: Individuals have biases when it comes to rating photos. Some consistently give higher scores than average, while others give scores that vary more randomly. By taking into account individual raters’ biases, a model can predict more accurately how a group would judge a photo.

How it works: Photofeeler-D3 scores the beauty, intelligence, and trustworthiness of a person in a photo on a scale of 1 to 10. The network was trained on more than 10 million ratings of over 1 million photos submitted by customers through the company website.

- Photofeeler-D3 learned each rater’s bias (that is, whether the person’s ratings tend to be extreme or middling) based on their ratings of photos in the training dataset. The model represents this individual bias as a vector.

- A convolutional neural network using the Xception architecture learned to predict a score for each trait. (The score wasn’t used.) After training, the CNN used its knowledge to generate vector representations of input images.

- The model samples a random rater from the training dataset. An additional feed-forward layer predicts that rater’s scores using the bias vector and photo vector.

- Then it averages its predictions of 200 random raters to simulate an assessment by the general public.
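The rater-sampling step can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than Photofeeler's implementation: the single linear layer, the `weights` and `bias` parameters, and both function names are hypothetical, and the photo and rater vectors are stand-ins for what the trained networks would produce.

```python
import random


def predict_rater_score(photo_vec, rater_vec, weights, bias):
    """Hypothetical linear layer over the concatenated photo and rater vectors."""
    features = list(photo_vec) + list(rater_vec)
    return sum(w * x for w, x in zip(weights, features)) + bias


def simulate_public_score(photo_vec, rater_vecs, weights, bias,
                          n_raters=200, seed=0):
    """Average the predicted scores of randomly sampled raters."""
    rng = random.Random(seed)
    # Sample with replacement from the raters seen in training.
    sampled = [rng.choice(rater_vecs) for _ in range(n_raters)]
    scores = [predict_rater_score(photo_vec, r, weights, bias) for r in sampled]
    return sum(scores) / len(scores)
```

Averaging over many sampled raters, rather than predicting one mean score directly, is what lets each rater's consistent tendencies inform the estimate.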

Results: Tested on a dataset of face shots scored for attractiveness, Photofeeler’s good-looks rating achieved 81 percent correlation compared to the previous state of the art, 53 percent. On the researchers’ own dataset, the model achieved 80 percent correlation for beauty, intelligence, and trustworthiness.

Why it matters: Crowdsourced datasets inherit the biases of the people who contributed to them. Such biases add noise to the training process. But Photofeeler’s voter modeling turns raters’ bias into a benefit: Individuals tend to be consistent in the way they respond to other people’s looks, so combining individuals yields a more accurate result than estimating mean ratings while ignoring their source.

We’re thinking: We’d rather live in a world where a link to your GitHub repo gets you the most dates.

Tools For a Pandemic

Chinese tech giants have opened their AI platforms to scientists fighting coronavirus.

What’s new: Alibaba Cloud and Baidu are offering a powerful weapon to life-science researchers working to stop the spread of the illness officially known as Covid-19: free access to their computing horsepower and tools.

How it works: The companies have a variety of resources to support tasks like gene sequencing, protein screening, and drug development.

- Alibaba Cloud is providing access to its computing network as well as AI-driven drug discovery tools, datasets from earlier efforts to find drugs to combat SARS and MERS, and libraries of compounds. Researchers can apply by emailing Alibaba Cloud.

- In collaboration with Beijing’s Global Health Drug Discovery Institute, Alibaba Cloud is developing a platform to aggregate and distribute coronavirus-related information.

- Baidu offers LinearFold, a tool developed in collaboration with Oregon State University and the University of Rochester that rapidly sequences RNA.

Behind the news: Scientists worldwide are turning to AI to help control the outbreak. An effort led by Harvard Medical School is tracking the disease by mining social media posts. UK researchers used AI to explore properties of an existing drug that could be useful for treating the virus. Meanwhile, a U.S. company is using an algorithm to design molecules that could halt the bug’s ability to replicate.

Why it matters: The virus had infected nearly 45,000 people and killed more than 1,100 at press time.

We’re thinking: Donating compute, tools, and data to scientists fighting infectious diseases is a great idea. We hope other tech companies will pitch in.

A MESSAGE FROM DEEPLEARNING.AI

Explore federated learning and how you can retrain deployed models while maintaining user privacy. Take the new course on advanced deployment scenarios in TensorFlow. Enroll now

Fighting Fakes

A supergroup of machine learning models flags manipulated photos.

What’s new: Jigsaw, a tech incubator owned by Alphabet, released a system that detects digitally altered images. The organization is testing it with a dozen media partners including Rappler in the Philippines, Animal Politico in Mexico, and Code for Africa.

What’s in it: Assembler is an ensemble of six algorithms, each developed by a different team to spot a particular type of manipulation. Jigsaw put them together and trained them on a dataset from the U.S. National Institute of Standards and Technology’s Media Forensics Challenge.

- Jigsaw contributed a component that identifies deepfakes generated by StyleGAN.

- The University Federico II of Naples supplied two models, one that spots image splices and another that finds repeated patches of pixels, indicating that parts of an image were repeatedly copied and pasted.

- UC Berkeley developed a model that scans pixels for clues that they were produced by different cameras, an indication of image splicing.

- The University of Maryland’s contribution uses color values as a baseline to look for differences in contrast and other artifacts left by image editing software.

Why it matters: Fake images can deepen political divides, empower scammers, and distort history.

- In the U.S., members of Congress have tried to discredit former President Obama with fake pictures purporting to show him shaking hands with Iranian president Hassan Rouhani.

- Scammers used doctored images of the recent Australian bushfires to solicit donations for nonexistent relief funds.

- Disinformation is known to have influenced politics in Brazil, Kenya, the Philippines, and at least a dozen other democracies.

We’re thinking: Unfortunately, the next move for determined fakers may be to use adversarial attacks to fool this ensemble. But journalists working to keep future elections fair will need every tool they can get.

What Love Sounds Like

Female giant pandas are fertile for only 24 to 36 hours a year: Valentine’s Day on steroids. A new neural network alerts human keepers when a panda couple mates.

What’s new: Panda breeders are struggling to lift the creatures’ global population, and tracking success in mating helps maintain their numbers. WeiRan Yan of Sichuan University, with researchers from Chengdu Research Base of Giant Panda Breeding and Sichuan Academy of Giant Panda, developed CGANet, a speech recognition network that flags consummated unions based on panda vocalizations.

Key insight: Prior work discovered the relationship between panda calls and mating success. A preliminary model used hand-crafted features to recognize calls meaning, “Wow, honey, you were great!” CGANet uses features extracted through deep learning.

How it works: The researchers trained CGANet on recordings of pandas during mating season labeled for mating success.

- The model divides each recorded call into pieces and computes a frequency representation of each piece.

- It uses convolutional, recurrent, and attention layers in turn to find patterns that predict mating success in different aspects of the pieces and their interrelationships.

- It computes the probability of mating success for each piece, then sums the probabilities to generate a prediction for the call as a whole.
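The split, transform, and aggregate flow can be sketched as follows. This is a rough outline, not CGANet itself: the convolutional, recurrent, and attention layers are replaced by a placeholder `piece_probability` callable, a plain discrete Fourier transform stands in for whatever frequency representation the researchers used, and all function names are hypothetical.

```python
import cmath
import math


def split_into_pieces(samples, piece_len):
    """Cut a recording into consecutive, non-overlapping pieces of equal length."""
    last_start = len(samples) - piece_len
    return [samples[i:i + piece_len] for i in range(0, last_start + 1, piece_len)]


def magnitude_spectrum(piece):
    """Magnitudes of the discrete Fourier transform up to the Nyquist bin."""
    n = len(piece)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(piece)))
        for k in range(n // 2 + 1)
    ]


def call_score(samples, piece_len, piece_probability):
    """Sum per-piece probabilities; also return the number of pieces scored."""
    pieces = split_into_pieces(samples, piece_len)
    probs = [piece_probability(magnitude_spectrum(p)) for p in pieces]
    return sum(probs), len(probs)
```

Returning the piece count alongside the sum lets a caller compare calls of different lengths, for example by dividing the sum by the count.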

Results: CGANet’s predictions were 89.9 percent accurate, a new state of the art compared with the earlier model’s 84.5 percent. CGANet also substantially improved AUC (area under curve, a measure of true versus false positives).

Why it matters: Tracking a panda’s love life once required obtaining its hormones — a difficult and time-consuming feat. CGANet allows real-time, non-invasive prediction so keepers can give the less popular pandas another chance while they’re still fertile.

We’re thinking: For pandas, a happy Valentine’s Day is essential to perpetuate the species. Tools like CGANet could help save these unique creatures from extinction.

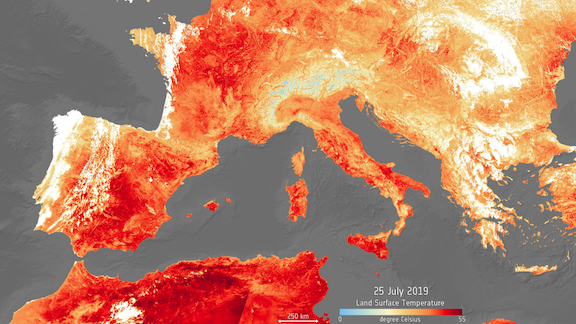

Extreme Weather Warning

Severe heat waves and cold snaps are especially hard to forecast because atmospheric perturbations can have effects that are difficult to compute. Neural networks show promise where typical methods have stumbled.

What’s new: Researchers at Rice University used a capsule neural network — a variation on a convolutional neural network — to forecast regional temperature extremes based on far fewer variables than usual.

How it works: Good historical observations date back only to 1979 and don’t include enough extreme-weather examples to train a neural network. So the researchers trained their model on simulated data from the National Center for Atmospheric Research’s Large Ensemble Community Project (LENS).

- Starting with 85 years’ worth of LENS data covering North America, the researchers labeled atmospheric patterns preceding extreme temperature swings by three days.

- Trained on atmospheric pressure at 5 kilometers, the model predicted cold spells five days out with 45 percent accuracy and heat spells (which are influenced more by local conditions) five days out with 40 percent accuracy.

- Retrained on both atmospheric pressure and surface temperature, the model’s five-day accuracy shot up to 76 percent for both winter and summer extremes.
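The labeling step, in which each day's atmospheric pattern is tagged with the extreme that follows it, might look like the sketch below. The input format (one temperature anomaly per day), the threshold of 2.0, and the label names are assumptions made for illustration; the researchers' actual criteria for an extreme event are not given here.

```python
def label_patterns(daily_anomalies, lead_days=3, threshold=2.0):
    """Label each day by the temperature extreme observed lead_days later.

    daily_anomalies: one temperature anomaly per day (hypothetical units).
    Returns one label per day that has a known outcome lead_days ahead.
    """
    labels = []
    for day in range(len(daily_anomalies) - lead_days):
        future = daily_anomalies[day + lead_days]
        if future >= threshold:
            labels.append("heat")
        elif future <= -threshold:
            labels.append("cold")
        else:
            labels.append("none")
    return labels
```

A classifier would then be trained to map the pattern on each day to its label, so that at prediction time it forecasts the extreme `lead_days` ahead.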

The next step: By adding further variables like soil moisture and ocean surface temperature, the researchers believe they can extend their model’s accuracy beyond 10 days. That would help meteorologists spot regional temperature extremes well ahead of time. Then they would use conventional methods to home in on local effects.

Why it matters: Extreme temperatures are disruptive at best, deadly at worst. Advance warning would help farmers save crops, first responders save lives, and ordinary people stay safe.

Behind the news: Most weather forecasting is based on crunching dozens of variables according to math formulas. In its reliance on matching historical patterns, this study’s technique (indeed, any deep learning approach to weather prediction) is a throwback to earlier methods. For instance, the U.S. military used temperature and atmospheric pressure maps to predict the weather before the Allied invasion of Normandy in 1944.

We’re thinking: Who says talking about the weather is boring?