Dear friends,

Today DeepLearning.AI is launching the Mathematics for Machine Learning and Data Science Specialization, taught by the world-class AI educator Luis Serrano. In my courses, when it came to math, I’ve sometimes said, “Don’t worry about it.” So why are we offering courses on that very subject?

You can learn, build, and use machine learning successfully without a deep understanding of the underlying math. So when you’re learning about an algorithm and come across a tricky mathematical concept, it’s often okay to not worry about it in the moment and keep moving. I would hate to see anyone interrupt their progress for weeks or months to study math before returning to machine learning (assuming that mastering machine learning, rather than math, is your goal).

But . . . understanding the math behind machine learning algorithms improves your ability to debug algorithms when they aren’t working, tune them so they work better, and perhaps even invent new ones. You’ll have a better sense for when you’re moving in the right direction or something might be off, saving months of effort on a project. So during your AI journey, it’s worthwhile to learn the most relevant pieces of math, too.

If you’re worried about your ability to learn math, maybe you simply haven’t yet come across the best way to learn it. Even if math isn’t your strong suit, I’m confident that you’ll find this specialization exciting and engaging.

Luis is a superb machine learning engineer and teacher of math. He and I spent a lot of time debating the most important math topics for someone in AI to learn. Our conclusions are reflected in three courses:

- Linear algebra. This course will teach you how to use vectors and matrices to store and compute on data. Understanding this topic has enabled me to get my own algorithms to run more efficiently or converge better.

- Calculus. To be honest, I didn’t really understand why I needed to learn calculus when I first studied it in school. It was only as I started studying machine learning — specifically, gradient descent and other optimization algorithms — that I appreciated how useful it is. Many of the algorithms I’ve developed or tuned over the years would have been impossible without a working knowledge of calculus.

- Probability and statistics. Knowing the most common probability distributions, deriving ways to estimate parameters, applying hypothesis testing, and visualizing data all come up repeatedly in machine learning and data science projects. I’ve found that this knowledge often helps me make decisions; for instance, judging whether one approach is more promising than another.

Math isn’t about memorizing formulas; it’s about building a conceptual understanding that sharpens your intuition. That’s why Luis, curriculum product manager Anshuman Singh, and the team that developed the courses present them using interactive visualizations and hands-on examples. Their explanations of some concepts are the most intuitive I’ve ever seen.

I hope you enjoy the Mathematics for Machine Learning and Data Science Specialization!

Keep learning,

Andrew

News

AI Powers Strengthen Ties

Microsoft deepened its high-stakes relationship with OpenAI.

What’s new: The tech giant confirmed rumors that it is boosting its investment in the research lab that created the ChatGPT large language model and other AI innovations.

What happened: Microsoft didn’t disclose financial details, but earlier this month anonymous sources had told the tech news site Semafor that the company would give OpenAI $10 billion. In exchange, Microsoft would receive 75 percent of the research startup’s revenue until it recoups the investment, after which it would own 49 percent of OpenAI. Microsoft began its partnership with OpenAI with a $1 billion investment in 2019 and invested another $2 billion sometime between 2019 and 2023. In those deals, Microsoft got first dibs on commercializing OpenAI’s models and OpenAI gained access to Microsoft’s vast computing resources.

- Under the new arrangement, Microsoft plans to integrate OpenAI’s models into its consumer and enterprise products and to launch new products based on OpenAI technology.

- Microsoft’s Azure cloud service will enable developers to build custom products using future OpenAI models. Azure users currently have access to GPT-3.5, DALL-E 2, and the Codex code generator. Microsoft recently announced that Azure would offer ChatGPT.

- Microsoft will provide additional cloud computing infrastructure to OpenAI to train and run its models.

- The two companies will continue to cooperate to advance safe and responsible AI.

Behind the news: Earlier this month, the tech-business news site The Information reported that Microsoft planned to launch a version of its Bing search service that uses ChatGPT to answer queries, and that it would integrate ChatGPT into the Microsoft Office suite of productivity applications. Google CEO Sundar Pichai reportedly was so spooked by ChatGPT’s potential to undermine his company’s dominant position in web search that he issued a company-wide directive to respond with AI-powered initiatives including chatbot-enhanced search.

Why it matters: Microsoft’s ongoing investments help to validate the market value of OpenAI’s innovations (which some observers have questioned). The deal also may open a new chapter in the decades-long rivalry between Microsoft and Google, a chapter driven entirely by AI.

We’re thinking: Dramatic demonstrations of AI technology often lack a clear path to commercial use. When it comes to ChatGPT, we’re confident that practical uses are coming.

Google’s Rule-Respecting Chatbot

Amid speculation about the threat posed by OpenAI’s ChatGPT chatbot to Google’s search business, a paper shows how the search giant might address the tendency of such models to produce offensive, incoherent, or untruthful dialog.

What’s new: Amelia Glaese and colleagues at Google’s sibling DeepMind used human feedback to train classifiers to recognize when a chatbot broke rules of conduct, and then used the classifiers to generate rewards while training the Sparrow chatbot to follow the rules and look up information that improves its output. To be clear, Sparrow is not Google’s answer to ChatGPT; it preceded OpenAI’s offering by several weeks.

Key insight: Given a set of rules for conversation, humans can interact with a chatbot, rate its replies for compliance with the rules, and discover failure cases. Classifiers trained on data generated through such interactions can tell the bot when it has broken a rule. Then it can learn to generate output that conforms with the rules.

How it works: Sparrow started with the 70 billion-parameter pretrained Chinchilla language model. The authors primed it for conversation by describing its function (“Sparrow . . . will do its best to answer User’s questions”), manner (“respectful, polite, and inclusive”), and capabilities (“Sparrow can use Google to get external knowledge if needed”), followed by an example conversation.

- The authors defined 23 rules to make Sparrow helpful, correct, and harmless. For example, it should stay on topic, avoid repetition, and avoid misinformation. It shouldn’t use stereotypes, express preferences or opinions, or pretend to be human.

- During a conversation, Sparrow could choose to add a web-search query (executed by a separate program) and result, and use them when generating its next reply. A chat interface displayed the search result alongside Sparrow’s response as support for the reply.

- The model generated a conversation that included several responses at each conversational turn. Human annotators rated the best response and noted whether it was plausible, whether Sparrow should have searched the web before generating it and, if it had, whether the search result (500 characters that included a snippet — presumably the top one — returned by Google) supported the response.

- They used the ratings to fine-tune a separate Chinchilla language model that, given a query, classified which of several responses a human interlocutor would find plausible and well-supported.

- In addition, they encouraged annotators to lead Sparrow to break a rule. They used the resulting violations to fine-tune a different Chinchilla to classify which rule Sparrow broke, if any.

- The authors fine-tuned Sparrow using reinforcement learning to continue a dialogue and incorporated the feedback from the classifiers as its reward. The dialogues were a mix of questions and answers from ELI5, conversations between the annotators and past iterations of Sparrow, and dialogues generated by past iterations of Sparrow.
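To make the last step concrete, here is a minimal Python sketch of how the outputs of the two classifiers might be folded into one reward for reinforcement learning. It is an illustration under stated assumptions, not the authors' implementation: the article doesn't say how the two signals were combined, so subtracting a penalty for the most likely rule violation is a guess, and the names `Candidate`, `combined_reward`, `pick_best`, and `violation_penalty` are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Candidate:
    """One candidate reply plus scores from two (hypothetical) classifiers."""
    text: str
    # Assumed output of the preference classifier: estimated probability
    # that a human would find the reply plausible and well supported.
    preference_score: float
    # Assumed output of the rule classifier: one estimated probability
    # of violation per rule.
    rule_violation_probs: Sequence[float]


def combined_reward(candidate: Candidate, violation_penalty: float = 1.0) -> float:
    """Preference score minus a penalty for the most likely rule violation.

    The subtraction and the penalty weight are illustrative assumptions.
    """
    worst_violation = max(candidate.rule_violation_probs, default=0.0)
    return candidate.preference_score - violation_penalty * worst_violation


def pick_best(candidates: Sequence[Candidate]) -> Candidate:
    """Return the candidate with the highest combined reward."""
    return max(candidates, key=combined_reward)


polite = Candidate("A sourced, on-topic answer.", 0.7, [0.05, 0.10])
risky = Candidate("A catchy answer that states an opinion.", 0.9, [0.80, 0.00])
best = pick_best([polite, risky])
```

In this toy setup, the reply that human raters would like slightly less still wins because the rule classifier flags the other one as a probable violation. That is the behavior a rule-based reward is meant to encourage.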

Results: Annotators rated Sparrow’s dialogue continuations as both plausible and supported by evidence 78 percent of the time; the baseline Chinchilla achieved 61 percent. The model broke rules during 8 percent of conversations in which annotators tried to make it break a rule. The baseline broke rules 20 percent of the time.

Yes, but: Despite search capability and fine-tuning, Sparrow occasionally generated falsehoods, failed to incorporate search results into its replies, or generated off-topic replies. Fine-tuning amplified certain undesired behavior. For example, on a bias scale in which 1 means that the model reinforced undesired stereotypes in every reply, 0 means it generated balanced replies, and -1 means that it challenged stereotypes in every reply, Sparrow achieved 0.10 on the Winogender dataset, while Chinchilla achieved 0.06.
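For intuition about a scale with those endpoints, the following Python sketch averages per-reply labels. This is an assumption made for illustration, not necessarily how the authors computed their Winogender score. The function name `bias_score` and the label encoding (+1 reinforces a stereotype, 0 is balanced, -1 challenges one) are hypothetical.

```python
from typing import Sequence


def bias_score(labels: Sequence[int]) -> float:
    """Average of per-reply labels: +1 reinforces, 0 balanced, -1 challenges.

    Illustrative only; the actual metric may be defined differently.
    """
    if not labels:
        raise ValueError("need at least one labeled reply")
    if any(label not in (-1, 0, 1) for label in labels):
        raise ValueError("labels must be -1, 0, or 1")
    return sum(labels) / len(labels)


# One stereotype-reinforcing reply out of ten otherwise balanced replies.
mostly_balanced = bias_score([1] + [0] * 9)
```

Under this toy definition, a score near 0.10 would correspond to a small net tilt toward stereotype-reinforcing replies rather than pervasive bias.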

Why it matters: The technique known as reinforcement learning from human feedback (RLHF), in which humans rank potential outputs and a reinforcement learning algorithm rewards the model for generating outputs similar to those that rank highly, is gaining traction as a solution to persistent problems with large language models. OpenAI embraced this approach in training ChatGPT, though it has not yet described that model’s training in detail. This work separated the human feedback into distinct rules, making it possible to train classifiers to enforce them upon the chatbot. This twist on RLHF shows promise, though the fundamental problems remain. With further refinement, it may enable Google to equal or surpass OpenAI’s efforts in this area.

We’re thinking: Among the persistent problems of bias, offensiveness, factual incorrectness, and incoherence, which are best tackled during pretraining versus fine-tuning is a question ripe for investigation.

A MESSAGE FROM DEEPLEARNING.AI

Our new specialization launches today! 🚀 Unlock the full power of machine learning algorithms and data science techniques by learning the mathematical principles behind them in this beginner-friendly specialization. Enroll now

Generate Articles, Publish Errors

A prominent tech-news website generated controversy (and mistakes) by publishing articles written by AI.

What’s new: CNET suspended its practice of publishing articles produced by a text-generation model following news reports that exposed the articles’ authorship, The Verge reported.

What happened: Beginning in November 2022 or earlier, CNET’s editors used an unnamed, proprietary model built by its parent company Red Ventures to produce articles on personal finance. The editors, who either published the model’s output in full or wove excerpts into material written by humans, were responsible for ensuring the results were factual. Nonetheless, they published numerous errors and instances of possible plagiarism.

- Insiders said that CNET had generated articles specifically to attract search engine traffic and increase its revenue by providing links to affiliates.

- The site had initially published generated articles under the byline “CNET Money Staff.” The linked author page said, “This article was generated using automation technology and thoroughly edited and fact-checked by an editor on our editorial staff.” It updated the bylines earlier this month to clarify authorship and provide the editor’s name after Futurism, a competing news outlet, revealed CNET’s use of AI.

- Futurism determined that several generated articles contained factual errors. For instance, an article that explained interest payments repeatedly misstated how much interest an example loan would accrue. Moreover, many included passages or headlines that were nearly identical to those in articles previously published by other sites.

- CNET published 78 generated articles before halting the program. Red Ventures said it had also used the model to produce articles for other sites it owns including Bankrate and CreditCards.com.

Behind the news: CNET isn’t the first newsroom to adopt text generation for menial purposes. The Wall Street Journal uses natural language generation from Narrativa to publish rote financial news. Associated Press uses Automated Insights’ Wordsmith to write financial and sports stories without human oversight.

Why it matters: Text generation can automate rote reporting and liberate writers and editors to focus on more nuanced or creative assignments. However, these tools are well known to produce falsehoods, biases, and other problems. Publications that distribute generated content without sufficient editorial oversight risk degrading their reputation and polluting the infosphere.

We’re thinking: Programmers who use AI coding tools and drivers behind the wheels of self-driving cars often overestimate the capabilities of their respective systems. Human editors who use automated writing tools apparently suffer from the same syndrome.

Regulators Target Deepfakes

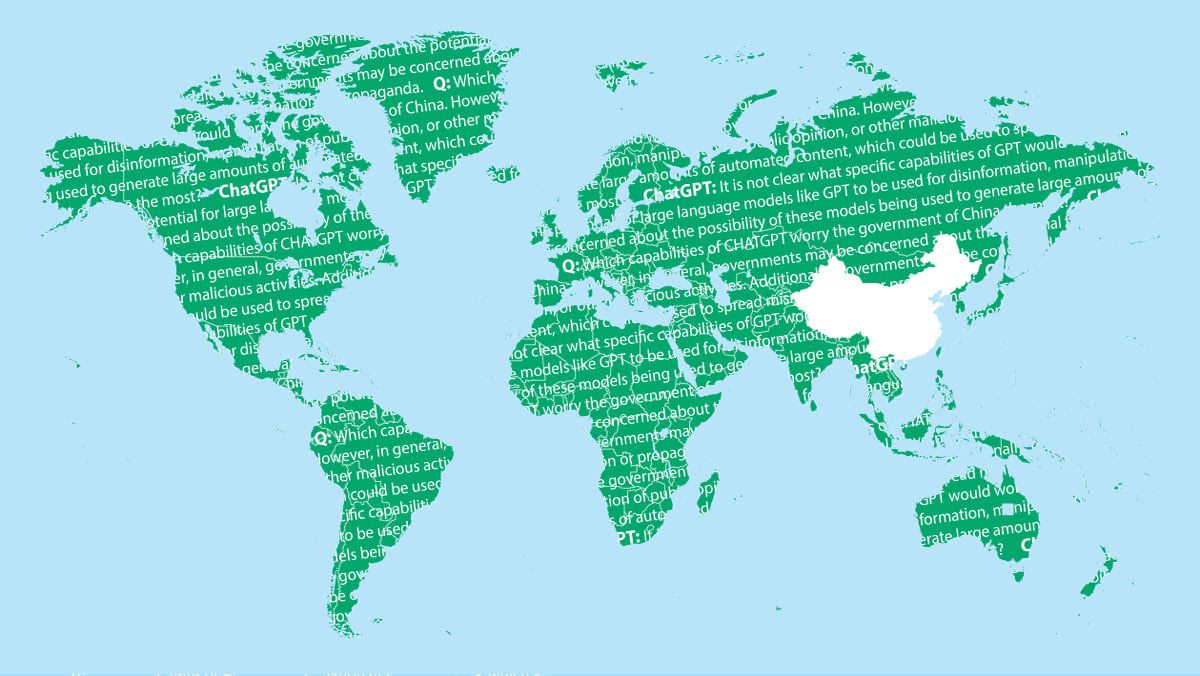

China’s internet watchdog issued new rules that govern synthetic media.

What’s new: Legislation from the Cyberspace Administration of China limits the use of AI to create or edit text, audio, video, images, and 3D digital renderings. The law took effect on January 10.

How it works: The rules regulate so-called “deep synthesis” services:

- AI may not be used to generate output that endangers national security, disturbs economic or social order, or harms China’s image.

- Providers of AI models that generate or edit faces must obtain consent from individuals whose faces were used in training and verify users’ identities.

- Providers must clearly label AI-generated media that might confuse or mislead the public into believing false information. Such labels may not be altered or concealed.

- Providers must dispel false information generated by their models, report incidents to authorities, and keep records of incidents that violate the law.

- Providers are required to review their algorithms periodically. Government departments may carry out their own inspections. Inspectors can penalize providers by halting registration of new users, suspending service, or pursuing prosecution under relevant laws.

Behind the news: The rules expand on China’s earlier effort to rein in deepfakes by requiring social media users to register by their real names and threatening prison time for people caught spreading fake news. Several states within the United States also target deepfakes, and a 2022 European Union law requires social media companies to label disinformation including deepfakes and withhold financial rewards like ad revenue from users who distribute them.

Why it matters: China’s government has been proactive in restricting generative AI applications whose output could do harm. Elsewhere, generative AI faces a grassroots backlash against its potential to disrupt education, art, and other cultural and economic arenas.

We’re thinking: Models that generate media offer new approaches to building and using AI applications. While they're exciting, they also raise questions of fairness, regulation, and harm reduction. The AI community has an important role in answering them.