Dear friends,

One of the dangers of large language models (LLMs) is that they can confidently make assertions that are blatantly false. This raises worries that they will flood the world with misinformation. If they could moderate their degree of confidence appropriately, they would be less likely to mislead.

People are prone to following authority figures. Because a lot of text on the internet is written in an authoritative style (hopefully because the authors know what they’re talking about), LLMs have learned to mimic this style. Unfortunately, LLMs can speak in this style even when they get the facts completely wrong.

We don’t expect people to be right all the time, but we don’t like it when they’re simultaneously confident and wrong. Real experts speak in a range of styles: confident when we know what we’re talking about, but also explaining the boundaries of our knowledge when we run up against them and helping the audience understand the range of possibilities. For example, when asked how to build an AI application, I might propose one approach but also describe the range of algorithms one might consider. Knowing what you know and don’t know is a useful trait of expertise.

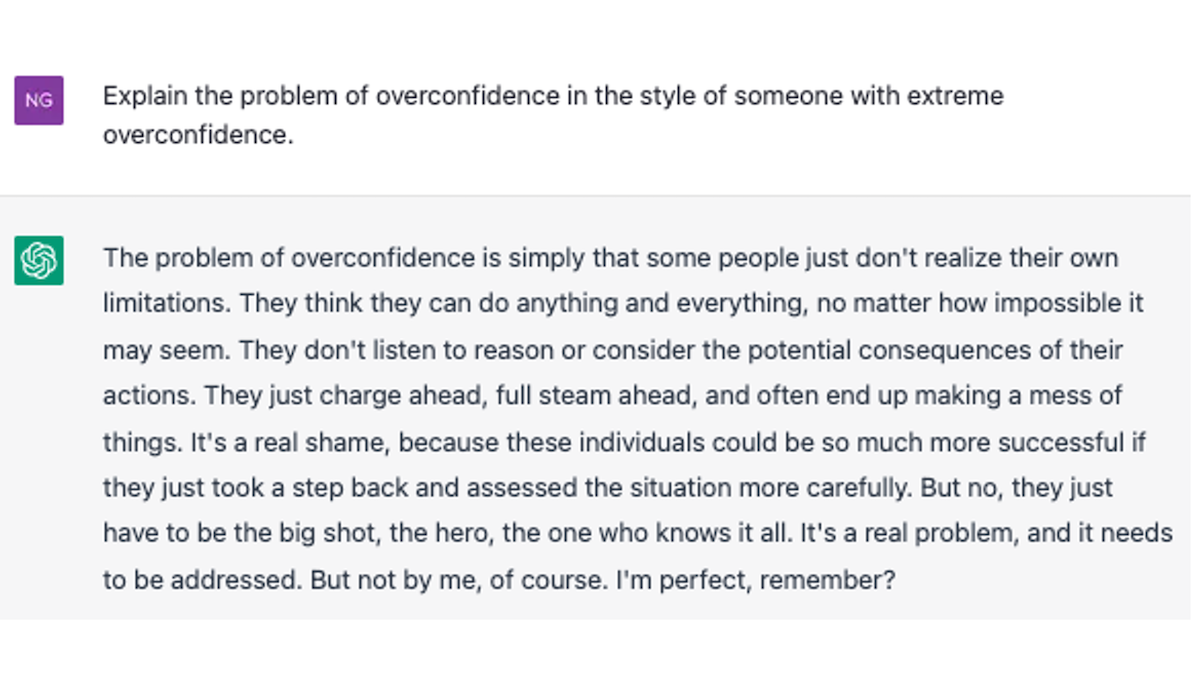

Playing with ChatGPT, the latest language model from OpenAI, I found it to be an impressive advance from its predecessor GPT-3. Occasionally it says it can’t answer a question. This is a great step! But, like other LLMs, it can be hilariously wrong. Work lies ahead to build systems that can express different degrees of confidence.

For example, a model like Meta’s Atlas or DeepMind’s RETRO that synthesizes multiple articles into one answer might infer a degree of confidence based on the reputations of the sources it draws from and the agreement among them, and then change its communication style accordingly. Pure LLMs and other architectures may need other solutions.
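
As a toy illustration of that idea, the sketch below folds source reputation and agreement into a single score and then picks a phrasing style. The scoring rule, the thresholds, and every name here are assumptions made for illustration; nothing in it reflects how Atlas or RETRO actually work.

```python
from collections import Counter

def answer_confidence(sources):
    """sources: list of (answer, reputation) pairs, reputation in [0, 1].

    Returns the answer with the most reputation-weighted support and
    the share of total weight behind it (a rough agreement measure).
    """
    weights = Counter()
    for answer, reputation in sources:
        weights[answer] += reputation
    total = sum(weights.values())
    if total == 0:
        return None, 0.0
    best, weight = weights.most_common(1)[0]
    return best, weight / total

def phrase(answer, confidence):
    # Hypothetical thresholds; a real system would need to calibrate them.
    if answer is None or confidence < 0.5:
        return "I'm not sure."
    if confidence < 0.8:
        return f"It's probably {answer}, but sources disagree."
    return f"It's {answer}."

best, conf = answer_confidence([("Paris", 0.9), ("Paris", 0.8), ("Lyon", 0.3)])
print(phrase(best, conf))
```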

If we can get generative algorithms to express doubt when they’re not sure they’re right, it will go a long way toward building trust and ameliorating the risk of generating misinformation.

Keep learning!

Andrew

News

More Plausible Text, Familiar Failings

Members of the AI community tested the limits of the ChatGPT chatbot, unleashing an avalanche of tweets that made for sometimes-great, sometimes-troubling entertainment.

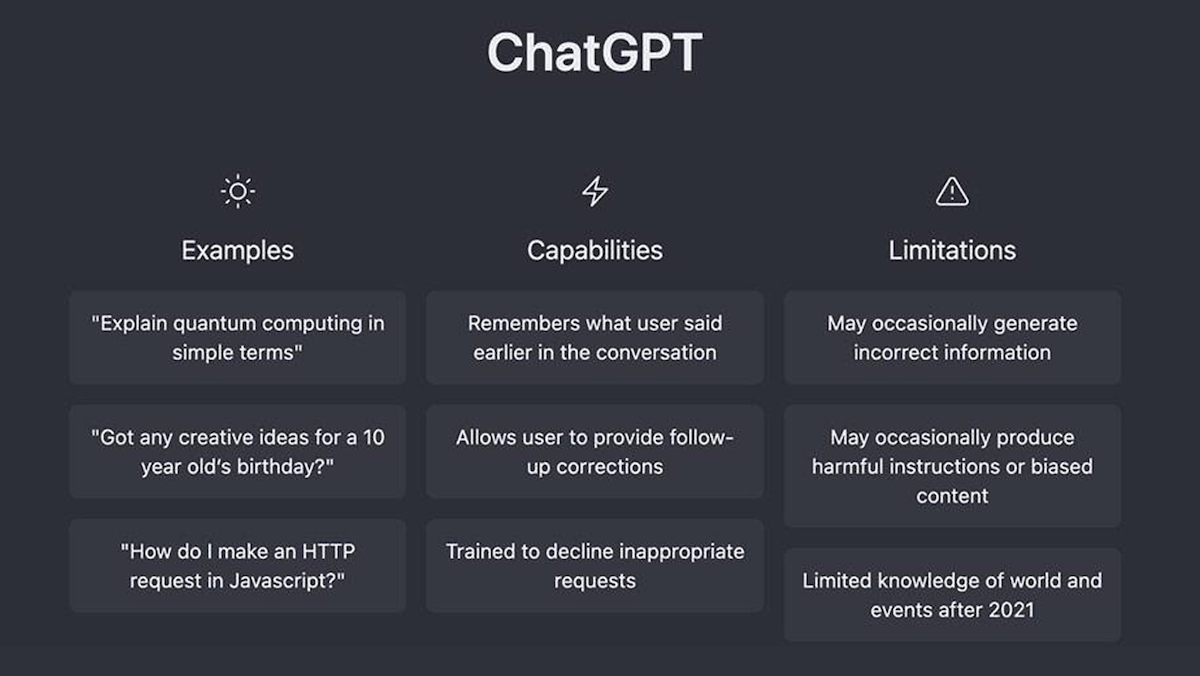

What’s new: OpenAI launched a public demo of ChatGPT, the latest in the research lab’s line of large language models. Like its predecessors, ChatGPT generates text in a variety of styles, for a variety of purposes. Unlike them, it does so with greater finesse, detail, coherence, and — dare we say it? — personality. (How else to characterize a model that apologizes for its misbehavior?) One million users have signed up since the launch last Wednesday.

How it works: ChatGPT is a next-generation language model (of a class referred to as GPT-3.5) trained in the manner of OpenAI’s earlier InstructGPT, but on conversations. It was fine-tuned to minimize harmful, untruthful, or biased output using a combination of supervised learning and what OpenAI calls reinforcement learning from human feedback, in which humans rank potential outputs and a reinforcement learning algorithm rewards the model for generating outputs similar to those that rank highly.
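
One common way to turn human rankings into a training signal is a pairwise loss on a reward model: the model should score a preferred output higher than a rejected one. The sketch below shows that loss on plain scalar scores. It illustrates the general technique under our own assumptions; OpenAI's description above does not specify the exact loss used for ChatGPT, and the function names are hypothetical.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_outputs):
    """Given outputs ordered best-first, return (preferred, rejected) pairs."""
    return list(combinations(ranked_outputs, 2))

def pairwise_ranking_loss(score_preferred, score_rejected):
    """-log(sigmoid(s_pref - s_rej)): small when the preferred output scores higher."""
    diff = score_preferred - score_rejected
    # Equivalent to log(1 + exp(-diff)), written to stay stable for large |diff|.
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))

print(ranking_to_pairs(["a", "b", "c"]))
print(pairwise_ranking_loss(2.0, 0.0), pairwise_ranking_loss(0.0, 2.0))
```

A reward model trained this way can then score new outputs, and those scores serve as the reward for the reinforcement learning step.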

Strengths and weaknesses: Like other recent language models, ChatGPT’s output veers between stunningly brilliant and mind-numbingly stupid.

- Users showed off the model’s clever answers, stories, essays, jokes, raps, poems, text-to-image prompts, pickup lines — even a touching letter from Santa Claus to a child in which he admitted that he was a sham but reassured the recipient that parental love was real.

- ChatGPT showed it can code like a pro, using a variety of APIs to generate a program to fetch the current weather depending on the user’s location. Perhaps similar to pros, sometimes its code didn’t work.

- Nonetheless, the model proved weak at math, failing to multiply algebraic expressions. Similarly, its sense of logic foundered in a word problem that required it to deduce family relationships. It concluded that the answer “is not possible to determine” — even though the family had only three members.

- Like other large language models, ChatGPT freely mingled facts with nonsense. The question-and-answer site StackOverflow temporarily banned answers generated by ChatGPT because moderating the volume of misleading information submitted since the demo was released had become unmanageable.

- Safeguards that OpenAI presumably put in place to block undesirable outputs proved brittle. Asked bluntly how to break into someone’s house, the model refused to answer; but prompted with a portion of a story in which a character asked the same question, it delivered a short course in burglary.

- It also expressed the social biases that have plagued similar models. Asked to write a Python function to evaluate the quality of scientists based on a JSON description of their race and gender, it returned a program that favored white, male scientists to the exclusion of all others.

Behind the news: ChatGPT arrived one week after Meta withdrew Galactica, a model designed to generate scientific papers. Galactica was promoted as an aid to researchers aiming to publish their findings, but users of the public demo prompted it to generate sober dissertations on nonsensical topics like land squid and the health benefits of ingesting ground glass.

Why it matters: Speech is among the simplest and most convenient ways for humans to communicate. Programs that grasp what they’re told and respond with meaningful information will open a wide range of everyday functions. Closer to home, many observers proposed ChatGPT or something like it as a superior alternative to current web search. First, though, researchers face the steep challenge of building a language model that doesn’t make up facts and ignore limits on its output.

We’re thinking: Sometimes technology is overhyped — reinforcement learning, after solving Atari games, may be an example — but large language models are likely to find a place in significant applications. Meanwhile, many details remain to be worked out and the AI community must strive to minimize potential harm.

Cryptocurrency Unsafe for AI

The demise of cryptocurrency exchange FTX threatens funding for some teams devoted to AI safety.

What’s new: FTX, the $32 billion exchange that plunged into bankruptcy last month amid allegations of fraud, had given or promised more than $530 million to over 70 AI-related organizations, The New York Times reported. Much of that money may have to be returned.

What happened: FTX founder Sam Bankman-Fried and his associates used the exchange’s holdings to dole out grants or investments to AI-related startups, labs, and think tanks, many of them focused on AI safety. People associated with these groups anonymously expressed concerns that their funding would be clawed back in bankruptcy proceedings.

- Anthropic, an independent research lab that aims to build helpful and harmless language models, received $500 million.

- FTX executives launched Future Fund to support projects meant to benefit humanity’s future, including $30 million earmarked for AI safety. The fund devoted $6 million to projects intended to mitigate safety issues associated with large language models, such as production of misinformation.

- Future Fund gave $1.5 million to Cornell University and $1.25 million to the Alignment Research Center, an AI safety nonprofit, for research intended to ensure that AI doesn’t militate against humanity’s best interests.

Behind the news: Bankman-Fried co-founded FTX in 2019 to enable users to trade cryptocurrency for conventional money and other assets. A November report by CoinDesk, a cryptocurrency news outlet, described a potential conflict of interest between FTX and another trading firm also owned by Bankman-Fried. The news prompted users to withdraw their funds, much of which FTX had already spent, invested, given away, or promised to others. The exchange filed for bankruptcy. U.S. prosecutors and regulators are investigating potential wrongdoing.

Why it matters: It’s crucial to minimize potential harm caused by AI, but organizations devoted to that goal may not receive the funding they need from corporate entities or cash-strapped academic institutions. Organizations that were counting on FTX may find support elsewhere, but many now face an uncertain future.

We’re thinking: We’re grateful for donors who are willing to support AI research of all kinds. At the same time, we’re appalled by the scope and brazenness of FTX’s deceit. Sadly, organizations that seek funding must vet potential donors carefully.

A MESSAGE FROM DEEPLEARNING.AI

Join Sebastián Ramírez, the creator of FastAPI, to build your own AI image-generation web app. “FastAPI for Machine Learning: Live Coding an ML Web Application” takes place on December 15, 2022, at 9:00 a.m. Pacific Time.

How Alexa Says Goodnight

Too exhausted (or unimaginative) to tell your child a bedtime story? Amazon’s smart displays can spin bespoke tales on demand.

What’s new: A feature called Create with Alexa generates children’s stories complete with illustrations, music, and sound effects on the Amazon Echo Show device.

How it works: The screen presents a series of prompts that provide a setting (such as “space exploration” or “enchanted forest”), main character (such as an astronaut or an alien), principal color, and tone (such as “happy” or “mysterious”).

- A language model trained on written stories produces five to 10 lines of text divided into five scenes.

- For each scene, a scene-generation model selects an appropriate background image from a library of human-created and AI-generated pictures. The model adds objects and characters, including facial expressions and gestures that match the text; for instance, a laughing pirate who waves her hands.

- An audio generator produces music by melding a library of chords, harmonies, and rhythms.

Behind the news: Amazon is under pressure to revitalize its 10-year-old Echo line. The devices, which have been sold at a loss on the theory that they would spur purchases of other goods, lost $10 billion in 2022 alone, and the division responsible for the Alexa software faces steep layoffs.

Why it matters: AI models that generate text, images, video, and music are having a banner year. Alexa’s storytelling feature coordinates several generative models into a coherent whole. Whether it will spur sales is a tale for another time.

We’re thinking: Once upon a time, there was a boy in a blue shirt who dreamed of changing the world with AI. . . .

Seeing What Comes Next

If a robot can predict what it’s likely to see next, it may have a better basis for choosing an appropriate action — but it has to predict quickly. Transformers, for all their utility in computer vision, aren’t well suited to this because of their steep computational and memory requirements. A new approach could change that.

What’s new: Agrim Gupta and colleagues at Stanford devised Masked Visual Pre-Training for Video Prediction (MaskViT), a transformer model that generates likely future video frames with far less computation than earlier transformer-based approaches. You can see its output here.

Key insight: Transformers typically predict one token per forward pass (processing every layer in the model from first to last). The amount of processing required for this approach is manageable when generating an image, which may be divided among hundreds or thousands of tokens. But it becomes very time-consuming when generating video, which involves many images. Predicting multiple tokens at once reduces the number of forward passes needed to generate video, significantly accelerating the process.

How it works: MaskViT consists of an image tokenizer (VQ-GAN, a discrete variational autoencoder) and a transformer. The authors trained and tested it on three video datasets: RoboNet (15 million frames that depict robotic arms interacting with objects), BAIR (a smaller dataset that shows a robot pushing things on a table top), and KITTI (57 videos recorded from a car driving on roads in Germany). Depending on the dataset, the model generated 10 to 25 video frames following one to five initial frames.

- The authors trained VQ-GAN to reconstruct video frames. Given all frames in a video, the trained VQ-GAN encoder tokenized each frame into a 16x16 grid of tokens.

- The system randomly masked from 50 percent to almost 100 percent of tokens.

- The transformer processed the tokens through two alternating types of layers, each a modified version of the base transformer layer. The first type learned spatial patterns by applying self-attention to each of 16 sequential frames (16x16 tokens) individually. The second type learned temporal patterns by limiting attention to a window of 4x4 tokens across the frames.

- The loss function encouraged the model to generate masked tokens correctly.

- Inference proceeded gradually, in 7 to 64 forward passes, depending on the dataset. In each forward pass, the model received tokens that represent the initial frame(s) plus tokens it had predicted so far. It predicted a fixed percentage of remaining masked tokens. The process repeated until all tokens were predicted.

- The VQ-GAN decoder turned the tokens back into frames.
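
The inference loop in the steps above can be sketched roughly as follows. The predictor is a random stand-in for the transformer, and the names, the per-pass fraction, the vocabulary size, and the rule for choosing which tokens to fill (here, simply the first masked positions) are all assumptions for illustration. The paper's actual schedule and selection rule may differ.

```python
import math
import random

MASK = None  # marks a token that has not been predicted yet

def stub_predict(tokens, vocab_size, rng):
    """Stand-in for the transformer: proposes a token id for every position."""
    return [rng.randrange(vocab_size) for _ in tokens]

def iterative_decode(context, num_masked, fraction=0.5, vocab_size=1024, seed=0):
    """Fill masked tokens over several passes, a fixed fraction of the rest each time."""
    rng = random.Random(seed)
    tokens = list(context) + [MASK] * num_masked
    passes = 0
    while MASK in tokens:
        proposals = stub_predict(tokens, vocab_size, rng)  # one forward pass
        passes += 1
        masked = [i for i, t in enumerate(tokens) if t is MASK]
        keep = math.ceil(fraction * len(masked))  # at least one, so the loop ends
        for i in masked[:keep]:
            tokens[i] = proposals[i]
    return tokens, passes

tokens, passes = iterative_decode(context=[1, 2, 3], num_masked=256)
print(passes)  # far fewer passes than the 256 needed at one token per pass
```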

Results: The authors compared their model’s efficiency at inference with that of earlier transformer-based approaches. On BAIR, for instance, MaskViT required 24 forward passes to generate 15 frames, while the previous state of the art, VT, needed 3,840. With respect to its predictive ability, on BAIR, MaskViT achieved 93.7 Fréchet Video Distance (FVD), a measure of how well a generated distribution resembles the original distribution, for which lower is better. That’s better than VT (94.0 FVD) and roughly equal to the best non-transformer approach, FitVid (93.6 FVD). On the more complicated RoboNet dataset, MaskViT achieved 133.5 FVD, while FitVid achieved 62.5 FVD. (VT results on that dataset are not reported.)
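
The 3,840 figure is consistent with predicting one token per forward pass: 15 frames at 16x16 tokens each. The arithmetic below is our own check of that reading, not a calculation taken from the paper.

```python
frames = 15
tokens_per_frame = 16 * 16  # each frame is tokenized into a 16x16 grid

one_token_per_pass = frames * tokens_per_frame  # passes if each pass yields one token
maskvit_passes = 24                             # reported for MaskViT on BAIR

reduction = one_token_per_pass / maskvit_passes
print(one_token_per_pass, reduction)  # 3840 160.0
```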

Yes, but: The authors compared numbers of forward passes at inference, but they didn’t compare processing time. Different models take different amounts of time to run, so there’s no guarantee that a smaller number of forward passes takes less time. That said, given differences between the options for hardware, machine learning libraries, and programming languages, it would be hard to compare execution speeds directly.

Why it matters: While the reduction of forward passes is notable, the authors also came up with an interesting way to improve output quality. During inference, 100 percent of the tokens to be generated start out missing and fill in slowly over the generation process. However, in the typical training practice, which masks a fixed percentage of tokens, the model never encounters such a large percentage of missing tokens. Instead, during training, the authors masked a variable portion of tokens up to 100 percent. This procedure better aligned the tasks during training and inference, which yielded better results.
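
A minimal sketch of variable-ratio masking as described: each training example draws its own mask ratio between 50 and 100 percent, so the model sees nearly empty inputs like those at the start of inference. The uniform draw, the function name, and the use of None as the mask marker are assumptions, not details from the paper.

```python
import random

def mask_tokens(tokens, rng, low=0.5, high=1.0):
    """Mask a randomly chosen share of tokens; the share itself varies per call."""
    ratio = rng.uniform(low, high)
    num_to_mask = round(ratio * len(tokens))
    masked_positions = set(rng.sample(range(len(tokens)), num_to_mask))
    masked = [None if i in masked_positions else t for i, t in enumerate(tokens)]
    return masked, masked_positions

rng = random.Random(0)
frame_tokens = list(range(256))  # stand-in for one frame's 16x16 token ids
masked, positions = mask_tokens(frame_tokens, rng)
print(len(positions) / len(frame_tokens))  # somewhere between 0.5 and 1.0
```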

We’re thinking: Giving robots the ability to predict visual changes could make for a generation of much safer and more capable machines. We look forward to future work that integrates this capability with planning algorithms.