Dear friends,

While working on Course 3 of the Machine Learning Specialization, which covers reinforcement learning, I was reflecting on how reinforcement learning algorithms are still quite finicky. They’re very sensitive to hyperparameter choices, and someone experienced at hyperparameter tuning might get 10x or 100x better performance. Supervised deep learning was equally finicky a decade ago, but it has gradually become more robust with research progress on systematic ways to build supervised models.

Will reinforcement learning (RL) algorithms also become more robust in the next decade? I hope so. However, RL faces a unique obstacle in the difficulty of establishing real-world (non-simulation) benchmarks.

When supervised deep learning was at an earlier stage of development, experienced hyperparameter tuners could get much better results than less-experienced ones. We had to pick the neural network architecture, regularization method, learning rate, schedule for decreasing the learning rate, mini-batch size, momentum, random weight initialization method, and so on. Picking well made a huge difference in the algorithm’s convergence speed and final performance.

Thanks to research progress over the past decade, we now have more robust optimization algorithms like Adam, better neural network architectures, and more systematic guidance for default choices of many other hyperparameters, making it easier to get good results. I suspect that scaling up neural networks — these days, I don’t hesitate to train a 20 million-plus parameter network (like ResNet-50) even if I have only 100 training examples — has also made them more robust. In contrast, if you’re training a 1,000-parameter network on 100 examples, every parameter matters much more, so tuning needs to be done much more carefully.

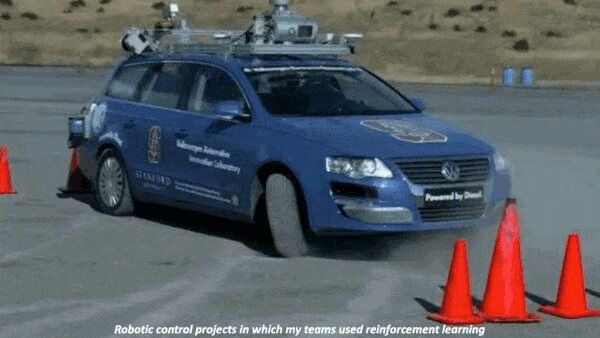

My collaborators and I have applied RL to cars, helicopters, quadrupeds, robot snakes, and many other applications. Yet today’s RL algorithms still feel finicky. Whereas poorly tuned hyperparameters in supervised deep learning might mean that your algorithm trains 3x or 10x more slowly (which is bad), in reinforcement learning, it feels like they might result in training 100x more slowly, if it converges at all! Similar to supervised learning a decade ago, numerous techniques have been developed to help RL algorithms converge (like double Q-learning, soft updates, experience replay, and epsilon-greedy exploration with slowly decreasing epsilon). They’re all clever, and I commend the researchers who developed them, but many of these techniques create additional hyperparameters that seem to me very hard to tune.
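
To make the tuning burden concrete, here is a minimal Python sketch of two of the techniques named above: epsilon-greedy exploration with a slowly decreasing epsilon, and soft updates of a target network. The function names and default values (`eps_start`, `eps_end`, `decay`, `tau`) are illustrative assumptions, not recommended settings. Each one is an extra knob that someone has to tune.

```python
import random

def decayed_epsilon(step, eps_start=1.0, eps_end=0.01, decay=0.995):
    """Shrink epsilon geometrically per step, never going below eps_end.

    eps_start, eps_end, and decay are hypothetical defaults for illustration.
    """
    return max(eps_end, eps_start * decay ** step)

def epsilon_greedy(q_values, epsilon, rng=random):
    """With probability epsilon pick a random action, otherwise the greedy one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=lambda a: q_values[a])

def soft_update(target_weights, online_weights, tau=0.001):
    """Move each target weight a small fraction tau toward the online weight."""
    return [(1.0 - tau) * t + tau * o
            for t, o in zip(target_weights, online_weights)]
```

Even this toy version exposes four hyperparameters beyond the learning rate, and their good values tend to depend on one another and on the task.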

Further research in RL may follow the path of supervised deep learning and give us more robust algorithms and systematic guidance for how to make these choices. One thing worries me, though. In supervised learning, benchmark datasets enable the global community of researchers to tune algorithms against the same dataset and build on each other’s work. In RL, the more commonly used benchmarks are simulated environments like OpenAI Gym. But getting an RL algorithm to work on a simulated robot is much easier than getting it to work on a physical robot.

Many algorithms that work brilliantly in simulation struggle with physical robots. Even two copies of the same robot design will be different. Further, it’s infeasible to give every aspiring RL researcher their own copy of every robot. While researchers are making rapid progress on RL for simulated robots (and for playing video games), the bridge to application in non-simulated environments is often missing. Many excellent research labs are working on physical robots. But because each robot is unique, one lab’s results can be difficult for other labs to replicate, and this impedes the rate of progress.

I don’t have a solution to these knotty issues. But I hope that all of us in AI collectively will manage to make these algorithms more robust and more widely useful.

Keep learning!

Andrew

DeepLearning.AI Exclusive

Q&A With Andrew Ng

Andrew answers questions about the new Machine Learning Specialization including:

- How is it different from the original course?

- Who should take it?

- What does it offer to people at different stages of an AI career?

News

Where Drones Fly Free

Autonomous aircraft in the United Kingdom are getting their own superhighway.

What’s new: The UK government approved Project Skyway, a 165-mile system of interconnected drone-only flight routes. The airspace is scheduled to open by 2024.

How it works: The routes, each just over six miles wide, will connect six medium-sized English cities including Cambridge, Coventry, Oxford, and Rugby. They avoid forested or ecologically sensitive areas, as well as major cities like London and Birmingham.

- A consortium of businesses will install a ground-based sensor network over the next two years to monitor air traffic along the Skyway. The sensors will supply information to help the drones navigate, removing the need for fliers to carry their own sensors.

- The sensors will also feed an air-traffic management system from Altitude Angel, which will help the craft avoid midair collisions.

- The UK government is considering future extensions to coastal urban areas like Southampton and Ipswich.

Behind the news: Project Skyway is the largest proposed designated drone flight zone, but it’s not the only one.

- A European Union effort based in Ireland aims to develop an air-traffic control system for autonomous aircraft including those used for deliveries, emergency response, agriculture, and personal transportation.

- In March 2021, authorities in Senegal granted approval for drone startup Volansi to fly its aircraft outside of operators’ line of sight.

- The California city of Ontario established safe flight corridors for drones built by Airspace Link to fly between warehouses and logistics centers. The plan awaits approval by the United States Federal Aviation Administration.

Yes, but: Although Skyway includes a collision-avoidance system, it’s not designed to prevent accidents during takeoff and landing, when they’re most common. Moreover, it's not yet clear whether the plan includes designated takeoff and landing sites. “The problem is what happens when you're 10 feet away from people,” one aerospace engineer told the BBC.

Why it matters: Drones are restricted from flying in most places due to worries that they could interfere — or collide — with other aircraft. By giving them their own airspace, the UK is allowing drones to deliver on their potential without putting other aircraft at risk.

We’re thinking: Figuring out how to operate drones safely has proven one of the most difficult aspects of deploying them in commercial applications. This project is a big step toward ironing out the regulatory bugs and also provides a relatively safe space to address technical issues.

Large Language Models Unbound

A worldwide collaboration produced the biggest open source language model to date.

What’s new: BLOOM is a family of language models built by the BigScience Research Workshop, a collective of over 1,000 researchers from 250 institutions around the globe.

How it works: BLOOM is a transformer model that emulates OpenAI’s GPT-3. It was trained on a custom 1.6-terabyte dataset to generate output in any of 46 human languages and 13 programming languages.

- The BigScience team hand-curated much of the data in an effort to mitigate bias. For instance, team members filtered out a significant amount of pornographic content, which they believe is over-represented in other datasets.

- The team trained BLOOM to complete text one word at a time using Megatron-DeepSpeed, which combines a transformer framework and a deep learning optimization library for distributed training. Megatron-DeepSpeed accelerated training by splitting the data and model across 384 GPUs.

- BLOOM is available in six sizes from 350 million to 176 billion parameters. Anyone with a Hugging Face account can query the full-size version through a browser app.

Behind the news: BigScience began in May 2021 as a year-long series of workshops aimed at developing open source AI models that are more transparent, auditable, and representative of people from diverse backgrounds than their commercial counterparts. Prior to BLOOM, the collaboration released the T0 family of language models, which were English-only and topped out at 11 billion parameters.

Why it matters: Developing large language models tends to be the province of large companies because they can afford to amass gargantuan datasets and expend immense amounts of processing power. This makes it difficult for independent researchers to evaluate the models’ performance, including biased or harmful outputs. Groups like BigScience and EleutherAI, which released its own open source large language model earlier this year, show that researchers can band together as a counterweight to Big AI.

We’re thinking: Just over two years since GPT-3’s debut, we have open access to large language models from Google, Meta, OpenAI, and now BigScience. The rapid progress toward access is bound to stimulate valuable research and commercial projects.

A MESSAGE FROM DEEPLEARNING.AI

Course 3 of the Machine Learning Specialization, “Unsupervised Learning, Recommender Systems, and Reinforcement Learning,” is available! Learn unsupervised techniques for anomaly detection, clustering, and dimensionality reduction. Build a recommender system, too! Enroll now

Protection for Pollinators

A machine learning method could help chemists formulate pesticides that target harmful insects but leave bees alone.

What’s new: Researchers at Oregon State University developed models that classify whether or not a chemical is fatally toxic to bees. The authors believe their approach could be used to screen pesticide formulations for potential harm to these crucial pollinators.

How it works: The authors trained two support vector machines to classify molecules as lethal or nonlethal. The dataset was 382 graphs of pesticide molecules, in which each atom is a node and each bond between atoms is an edge, labeled for toxicity. The researchers used a different method to train each model.

- In one method, the authors translated each graph into a vector that represented structural keys, arrangements of atoms that biochemists use to compare molecules. For instance, one feature indicated that a molecule includes phosphorus atoms. The model took these vectors as input.

- In the other method, the model’s input was a vector that counted the number of occurrences of all possible chains of four connected atoms. Similarly toxic molecules may share similar numbers of such groups.
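
The second featurization can be sketched in a few lines of Python. This is a simplified illustration, not the authors' code: it assumes a "chain" is a path through four distinct bonded atoms, ignores bond types, and treats a chain and its reverse as the same feature. The function name `count_four_atom_chains` and the input format are hypothetical.

```python
from collections import Counter

def count_four_atom_chains(atoms, bonds):
    """Count labeled chains of four connected atoms in a molecular graph.

    atoms: list of element symbols, e.g. ["C", "C", "O", "P"]
    bonds: list of (i, j) index pairs into atoms
    Assumption: a chain visits four distinct atoms; bond types are ignored.
    """
    neighbors = {i: set() for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].add(j)
        neighbors[j].add(i)

    counts = Counter()

    def extend(path):
        if len(path) == 4:
            # Each chain is found twice (once per direction); keep one copy.
            if path[0] < path[-1]:
                labels = tuple(atoms[k] for k in path)
                # Use one canonical label order so C-C-O-P equals P-O-C-C.
                counts[min(labels, labels[::-1])] += 1
            return
        for nxt in neighbors[path[-1]]:
            if nxt not in path:
                extend(path + [nxt])

    for start in range(len(atoms)):
        extend([start])
    return counts
```

The resulting counts, laid out in a fixed order over all chain types seen in the dataset, would form the input vector for a classifier such as a support vector machine.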

Results: The two models performed similarly. They accurately classified 81 to 82 percent of molecules as lethal or nonlethal to bees. Of the molecules classified as lethal, 67 to 68 percent were truly lethal.
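
The two figures above measure different things: the first is accuracy over all molecules, the second is precision on the "lethal" class. A minimal sketch of the distinction, using made-up confusion-matrix counts that are not taken from the study:

```python
def accuracy_and_precision(tp, fp, tn, fn):
    """Return (accuracy, precision) from confusion-matrix counts.

    tp/fp: molecules predicted lethal that are / are not truly lethal
    tn/fn: molecules predicted nonlethal that are / are not truly nonlethal
    """
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    return accuracy, precision

# Hypothetical toy counts for illustration only.
acc, prec = accuracy_and_precision(tp=2, fp=1, tn=6, fn=1)
```

A model can score well on accuracy while its precision lags, which matters if a "lethal" prediction is used to rule out a candidate pesticide.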

Behind the news: Bees play a crucial role in pollinating many agricultural products. Without them, yields of important crops like cotton, avocados, and most fruit would drop precipitously. Numerous studies have shown that pesticides are harmful to bees. Pesticides have contributed to increased mortality among domesticated honey bees as well as a decline in the number of wild bee species.

Why it matters: Pesticides, herbicides, and fungicides have their dangers, but they help enable farms to produce sufficient food to feed a growing global population. Machine learning may help chemists engineer pesticides that are benign to all creatures except their intended targets.

We’re thinking: It’s good to see machine learning take some of the sting out of using pesticides.

Choose the Right Annotators

Classification isn’t always cut and dried. While the majority of doctors are men and nurses women, that doesn't mean all men who wear scrubs are doctors or all women who wear scrubs are nurses. A new method attempts to account for biases that may be held by certain subsets of labelers.

What's new: Mitchell L. Gordon and colleagues at Stanford introduced a method to control bias in machine learning model outputs. Their jury learning approach models a user-selected subset of the annotators who labeled the training data.

Key insight: A typical classifier mimics how an average labeler would annotate a given example. Such output inevitably reflects biases typically associated with an annotator’s age, gender, religion, and so on, and if the distribution of such demographic characteristics among labelers is skewed, the model’s output will be skewed as well. How to correct for such biases? Instead of predicting the average label, a classifier can predict the label likely to be applied by each individual in a pool of labelers whose demographic characteristics are known. Users can choose labelers who have the characteristics they desire, and the model can emulate them and assign a label accordingly. This would enable users to correct for biases (or select for them).

How it works: The authors used jury learning to train a classifier to mimic the ways different annotators label the toxicity of social media comments. The dataset comprised comments from Twitter, Reddit, and 4chan.

- From a group of 17,280 annotators, five scored each comment from 0 (not toxic) to 4 (extremely toxic). In addition, each annotator specified their age, gender, race, education level, political affiliation, whether they’re a parent, and whether religion was an important part of their lives.

- BERTweet, a natural language model pre-trained on tweets in English, learned to produce representations of each comment. The system also learned embeddings for each annotator and demographic characteristic.

- The authors concatenated the representations and fed them into a Deep & Cross Network, which learned to reproduce the annotators’ classifications.

- At inference, the authors set a desired demographic mix for the virtual jury. The model selected 12 qualified annotators at random. Given a comment, the model predicted how each member would classify it and chose the label via majority vote.

- The authors repeated this process several times to render classifications by many randomly selected juries of the same demographic composition. The median rating provided the label.
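
The inference procedure in the last two steps can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' implementation: `predict_rating` is a hypothetical stand-in for the trained model, each annotator is reduced to a single `"group"` field, and the number of juries (`n_juries`) is an arbitrary choice.

```python
import random
import statistics
from collections import Counter

def sample_jury(pool, seats_per_group, rng):
    """Randomly fill each group's seats from the annotators in that group."""
    jury = []
    for group, seats in seats_per_group.items():
        eligible = [a for a in pool if a["group"] == group]
        jury.extend(rng.sample(eligible, seats))
    return jury

def jury_label(comment, pool, seats_per_group, predict_rating,
               n_juries=21, seed=0):
    """Median, over many random juries, of each jury's most common rating.

    predict_rating(comment, juror) is assumed to return a 0-4 toxicity score.
    """
    rng = random.Random(seed)
    verdicts = []
    for _ in range(n_juries):
        jury = sample_jury(pool, seats_per_group, rng)
        votes = Counter(predict_rating(comment, juror) for juror in jury)
        # Ties are broken by whichever rating was counted first.
        verdicts.append(votes.most_common(1)[0][0])
    return statistics.median(verdicts)
```

For a 12-member jury, `seats_per_group` might look like `{"parent": 6, "non-parent": 6}`. Changing that mix is how a user would shift whose judgment the output reflects.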

Results: The authors evaluated their model’s ability to predict labels assigned by individual annotators. It achieved 0.61 mean absolute error, while a BERTweet fine-tuned on the dataset achieved 0.9 mean absolute error (lower is better). The authors’ model achieved fairly consistent error rates when estimating how annotators of different races would label examples: Asian (0.62), Black (0.65), Hispanic (0.57), White (0.60). In contrast, BERTweet’s error rate was markedly higher for Black annotators than for other groups: Asian (0.83), Black (1.12), Hispanic (0.87), White (0.87). The authors’ model, which focused on estimating labels assigned by individuals, also outperformed a similar model that was trained to predict decisions by demographic groups, which scored 0.81 mean absolute error.

Why it matters: Users of AI systems may assume that data labels are objectively true. In fact, they’re often messy approximations, and they can be influenced by the circumstances and experiences of individual annotators. The jury method gives users a way to account for this inherent subjectivity.

We're thinking: Selecting a good demographic mix of labelers can reduce some biases and ensure that diverse viewpoints are represented in the resulting labels — but it doesn’t reduce biases that are pervasive across demographic groups. That problem requires a different approach.